Month: October 2021

#EduAI21

During our media literacy Twitter conference #SMILED21 I jokingly suggested that Pontydysgu had enough AI projects in progress to run a conference on its own. We haven't, quite, and a conference is no fun without the opportunity to find out, probe, query and be inspired by what everyone else is up to. So here it is:

#EduAI21 - Bridging the gap between research and practice for AI in education

An unconference-style event run on Twitter, #EduAI21 aims to bring researchers and practitioners together, presenting accessible research, information, chalk-face experiences, real-life case studies and cutting-edge technologies around the use of Artificial Intelligence in (and for) education.

This is free and open to all: anyone with a Twitter account and an idea can contribute.

Presenters will be given a time slot in which to tweet their 15-tweet presentation. Twitter limits how much text you can use, so the information should be precise and to the point. Multimedia is highly encouraged and there's a gold star for the best gif. Everyone else is encouraged to grab a hot drink, interact, retweet, like, reply, ask questions and share their own ideas.

Can you present your AI in education case study, research, project, idea or resource in up to 15 tweets? Submit your idea below and we will get back to you with a time slot and further details.

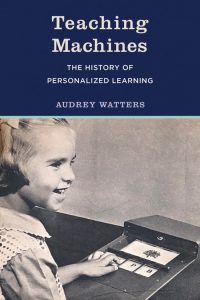

Teaching Machines

I've just finished reading Audrey Watters' long-awaited book, 'Teaching Machines'. I would have read it earlier, but it is difficult to get, at least in Spain: it took four weeks to reach me courtesy of Blackwell in Oxford. And it is every bit as good as others have said. It is rare that I get so engrossed in what is for me a 'work book', but Audrey really is a very good writer. Anyway, here are my eight main takeaways from the book.

- Ed-tech is not a recent invention. There is a clear line of development between the mechanical teaching machines developed from the 1920s onward in the USA and the computer-based ed-tech we use today.

- OK, this is a US-based history book. But it is interesting to note that the various companies making, or thinking about making, teaching machines were primarily, and more commonly solely, interested in the bottom line, i.e. potential profit, and had little or no interest in education per se.

- Already in the last century there was an ambiguity as to whether technology would replace teachers or was just there to assist them.

- Despite the importance of partnerships with industry to manufacture and distribute teaching machines, the driving force behind their development was academics, particularly from the then emergent discipline of psychology.

- The predominant pedagogic approach was behaviorism, with teaching machines designed to support operant conditioning.

- The major motivation behind advocating the widespread adoption of teaching machines was the idea that the American education system was failing, particularly in languages, sciences and STEM subjects. This idea was given a huge boost when the Soviet Union beat the USA to putting the first satellite into space.

- Teaching machines were largely dependent on standardization and standardized testing in education, both of which were a response to the idea that education was failing.

- There was no clear evidence that teaching machines actually led to improved learning and learning outcomes.

All these things sound horribly familiar to me, even with only twenty-five or so years' experience of the development of educational technology. But then, I guess that is why Audrey wrote the book in the first place. As a reviewer in Forbes said: "Reading this story, one suspects it might be fair to say that it is ed tech, not public education, that has not made a significant step forward in the last 100 years."

Are people better than AI?

I am struggling with the wealth of distractions that Twitter and the web offer up each day. I've even taken to getting up earlier to have more time to read in the mornings. Anyway, here is one of today's offerings, courtesy of Bloomberg Opinion. Parmy Olson has written an article entitled "Much Artificial Intelligence Is Still People Behind a Screen."

She says:

The practice of hiding human input in AI systems still remains an open secret among those who work in machine learning and AI. A 2019 analysis of tech startups in Europe by London-based MMC Ventures even found that 40% of purported AI startups showed no evidence of actually using artificial intelligence in their products.

One reason put forward is the premium being paid by potential investors to AI start-up companies. Another is the need for large amounts of training data to develop machine learning applications. Some companies claim that the human behind the screen is merely monitoring the AI apps. "But in some cases," says Olson, "these workers are doing more cognitively intensive tasks because the algorithms they oversee don’t work well enough on their own."

She points to the large numbers of content moderators employed by Facebook as evidence of the limitations of AI: its machine-learning algorithms don’t work well enough at stopping harmful content. But Facebook is not the only big corporation employing humans instead of AI. Amazon has the same problem with its popular Mechanical Turk (MTurk) platform.

A major concern is the poor pay and working conditions for many of those employed in the data labelling industry.