AI Pioneers Action Lab at the EDEN Digital Learning Europe Conference in Graz

Last week, at the EDEN Digital Learning Europe Conference in Graz, the AI Pioneers project organised an Action Lab. I wasn't quite sure what the idea of an Action Lab was, but we agreed that it seemed to imply some involvement and participation from those attending. Nor did we know exactly how many people would attend, given that, as at other large conferences, there were a considerable number of parallel sessions.

So, in the end, we decided to deliver a very short presentation introducing the AI Pioneers project and then split into groups to discuss three key questions:

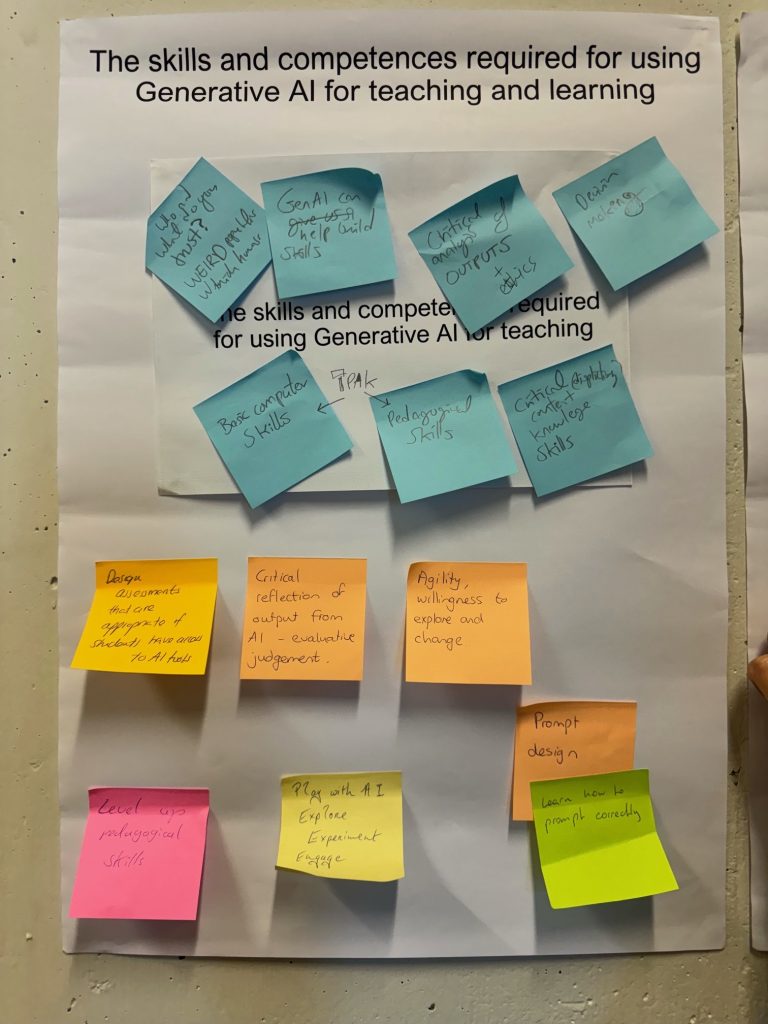

- The skills and competences required for using Generative AI for teaching and learning

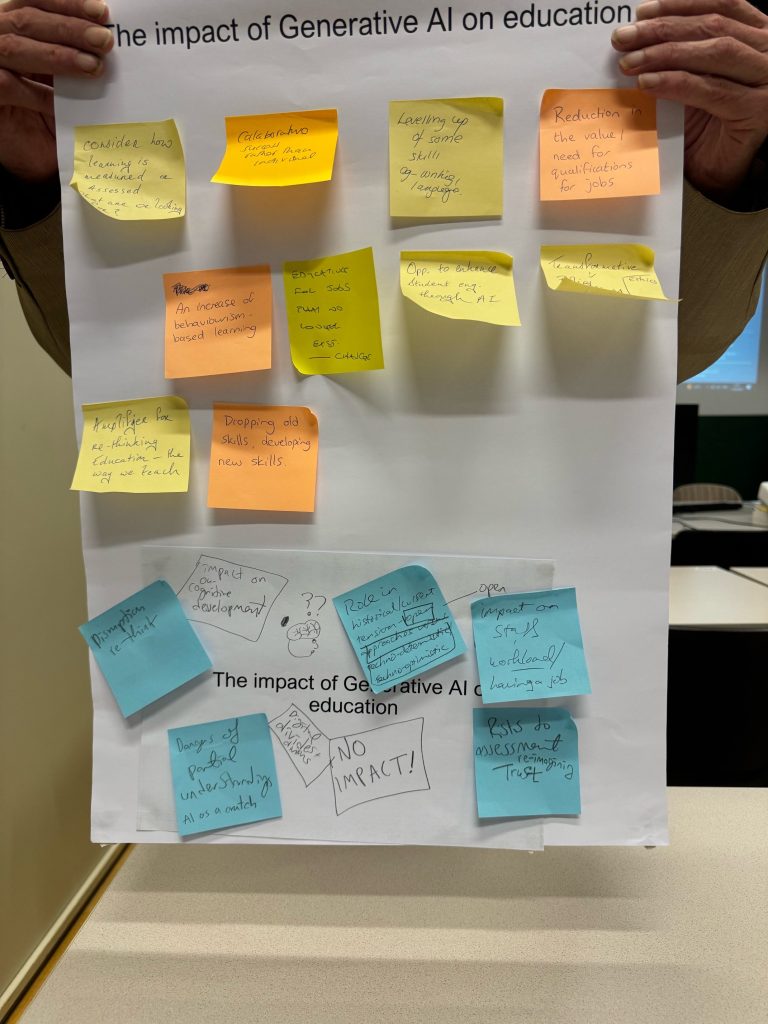

- The impact of Generative AI on education

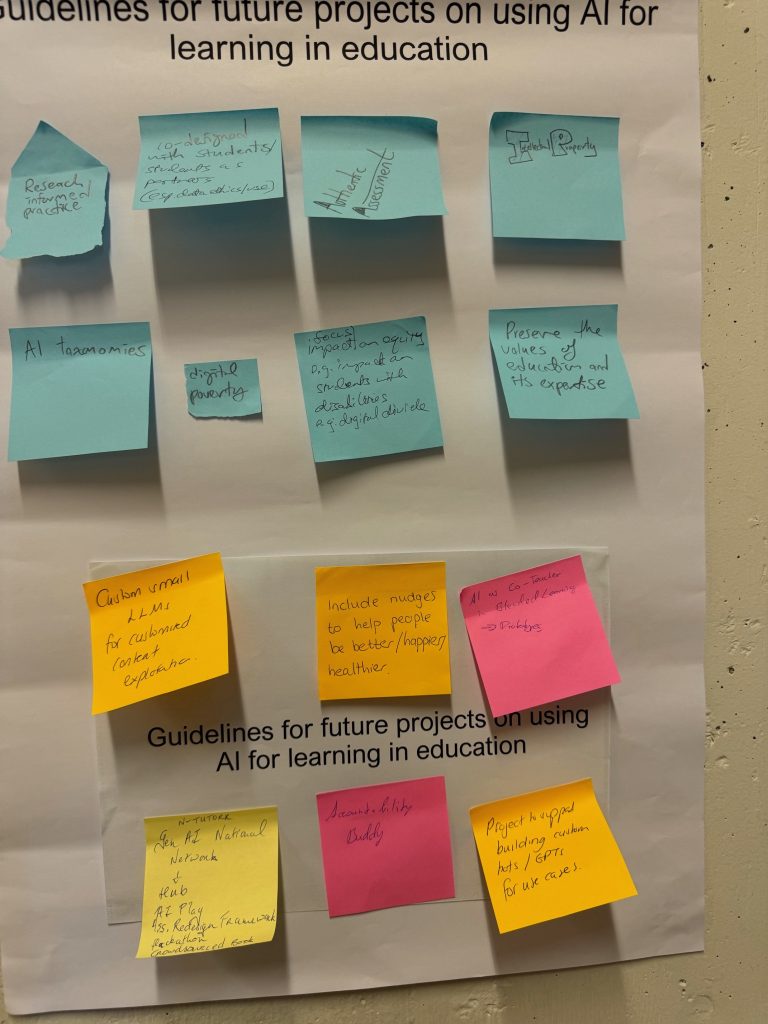

- Guidelines for future projects on using AI for learning in education

In the end, nearly 30 people turned up, in a room better suited to 15! We had only one hour for the Action Lab, so it was quick-fire stuff. Originally we had thought about using a Miro board to collect inputs, but there was not enough time for that. Instead we went back to basics and collected the replies on post-it notes stuck to posters on the wall, which we then photographed with our mobile phones.

The session was lively and a lot of fun (at least I thought so, and others I talked to afterwards agreed). Of course, a common problem with a setup like this is how to record and analyse the discussion afterwards. No problem: since this is about AI, I gave the photos to Claude 3.5 Sonnet with the following prompt:

Those stickers are responses in the question about the impact of AI in education from a workshop about AI in education. Can you extract the text from the stickers. Can you analyse and conclude ?

Here's what it came back with.

Skills and competences required for using Generative AI for teaching and learning:

- What do you want to achieve? WEIRD prompts

- GenAI can help build skills

- Critical analysis of outputs + ethics

- Domain understanding

- Basic computer skills

- TPACK (Technological Pedagogical Content Knowledge)

- Critical thinking and ethical skills

- Design documents and use appropriate AI skills, take notes to AI tools

- Critical reflection of output from AI - evaluative judgment

- Agility, willingness to explore and change

- Prompt design

- Level up pedagogical skills

- Play with AI, Explore, Experiment, Engage

- Learn how to prompt correctly

The impact of Generative AI on education:

- Consider how learning is assessed and how we define success

- Collaborative learning initiatives

- Leveling up of some skills or knowledge / language

- Reduction in the value/need for qualifications for jobs

- Possible increase of behaviorism-based learning

- Effective tool SaaS may no longer exist

- Opportunities to extend through AI

- Personalization of learning

- Amplifier for re-thinking Education - the way we teach

- Dropping old skills, developing new skills

- Disruption in trust

- Impact on our cognitive development

- Role in historical narratives + trust + misinformation

- Impact on staff workload/training on job

- Risks to assessment - cheating

- Danger of partial understanding AI as a crutch

- NO IMPACT!

Guidelines for future projects on using AI for learning in education:

- Research informed practice

- Co-design with students/teachers + partners

- Authentic Assessment

- Ethical Proofing

- AI taxonomies

- digital poverty

- Focus on innovation equity

- Preserve the values of education and its expertise

- Custom small LLMs for customized content exploration

- Include nudges to help people be better/happier/healthier

- AI as Co-Teacher + Blended Learning + Flippers

- AI Tutor for AI National Network of Hub AI Play fps, Research Financial, Pedagogy for all

- Accountability Buddy

- Project & support building custom kits / APIs for use cases

Analysis and Conclusion:

The sticky notes reveal a comprehensive exploration of AI's role in education, covering guidelines for implementation, necessary skills, and potential impacts. Here's an analysis of the key themes:

- Ethical Considerations: There's a strong emphasis on ethical implementation, including "Ethical Proofing" and preserving educational values. This suggests a cautious approach to AI adoption.

- Skill Development: Both educators and students need to develop new skills, such as prompt design, critical analysis of AI outputs, and understanding AI's capabilities and limitations.

- Personalization and Collaboration: AI is seen as a tool for personalizing learning experiences and fostering collaborative initiatives.

- Pedagogical Shifts: The notes indicate that AI may lead to changes in teaching methods, assessment practices, and the overall educational paradigm.

- Equity and Accessibility: There's concern about digital poverty and ensuring equitable access to AI-enhanced education.

- Potential Risks: Some notes highlight risks such as over-reliance on AI, impacts on trust, and potential for cheating.

- Workforce Implications: AI's impact extends beyond the classroom, potentially affecting job qualifications and workforce skills.

Conclusion: The overall sentiment towards AI in education is cautiously optimistic. While there's excitement about AI's potential to enhance learning experiences and personalize education, there's also a clear recognition of the challenges and risks involved. The focus is on responsible implementation, emphasizing the need for ethical guidelines, skill development, and maintaining human-centric values in education. The diverse perspectives represented in these notes suggest that the integration of AI in education will require careful planning, continuous assessment, and adaptability to ensure it benefits all stakeholders while mitigating potential drawbacks.