Wales Wide Web

How to be a trusted voice online

UNESCO have launched an online course in response to a survey of digital content creators, 73 per cent of whom requested training. According to UNESCO, the course aims to empower content creators to address disinformation and hate speech and to provide them with a solid grounding in global human rights standards on both Freedom of Expression and Information. The content was produced by media and information literacy experts in close collaboration with leading influencers around the world, so that it directly addresses the situations digital content creators actually face.

The course has just started and runs for four weeks; over 9,000 people from 160 countries have enrolled and are currently taking it. They will learn how to:

- source information using a diverse range of sources,

- assess and verify the quality of information,

- be transparent about the sources which inspire their content,

- identify, debunk and report misinformation, disinformation and hate speech,

- collaborate with journalists and traditional media to amplify fact-based information.

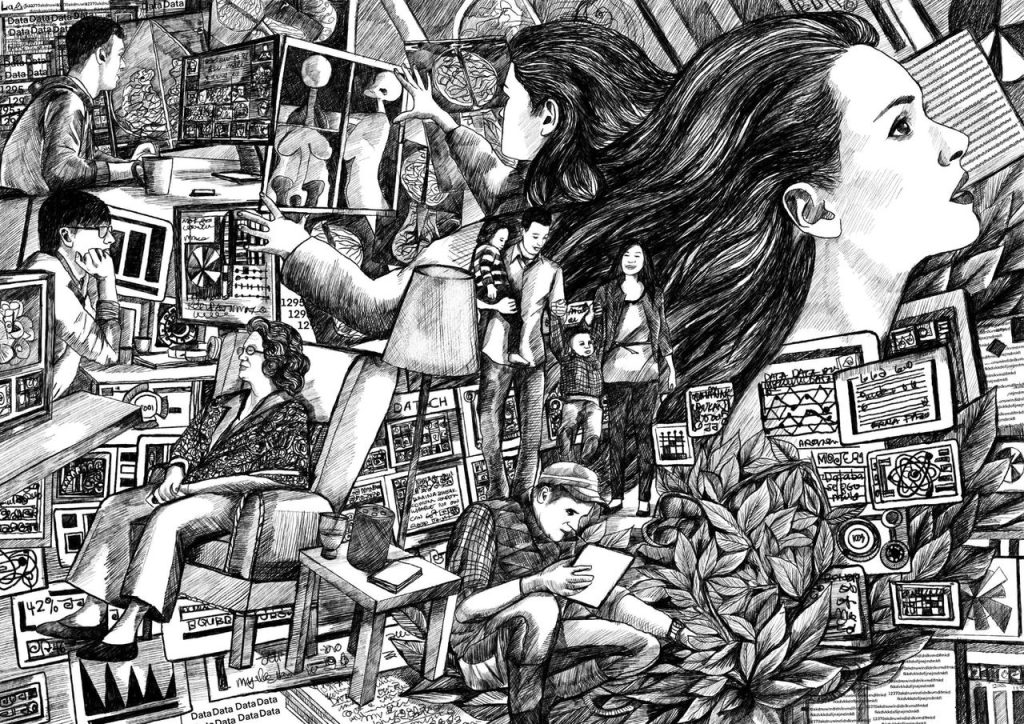

The UNESCO “Behind the screens” survey found that fact-checking is not the norm, and that content creators have difficulty determining the best criteria for assessing the credibility of information they find online. 42% of respondents said they used “the number of ‘likes’ and ‘shares’ a post had received” on social media as the main indicator. 21% were happy to share content with their audiences if it had been shared with them “by friends they trusted”, and 19% said they relied “on the reputation” of the original author or publisher of content.

UNESCO says that although journalists could help digital content creators verify the reliability of their information, links and cooperation between the two communities are still rare. Mainstream news media is only the third most common source (36.9%) for content creators, after their own experience and their own research and interviews.

The survey also revealed that a majority of digital content creators (59%) were either unfamiliar with or had only heard of regulatory frameworks and international standards relating to digital communications. Only slightly more than half of the respondents (56.4%) were aware of training programmes addressed to them, and only 13.9% of those who were aware of these programmes had participated in any of them.

Public Domain Data

Social generative AI for education

I am very impressed with a paper, Towards social generative AI for education: theory, practices and ethics, by Mike Sharples. Here is a quick summary, but I recommend reading the entire article.

In his paper, Mike Sharples explores the evolving landscape of generative AI in education by discussing different AI system approaches. He identifies several potential AI types that could transform learning interactions: generative AIs that act as possibility generators, argumentative opponents, design assistants, exploratory tools, and creative writing collaborators.

The research highlights that current AI systems primarily operate through individual prompt-response interactions. However, Sharples suggests the next significant advancement will be social generative AI capable of engaging in broader, more complex social interactions. This vision requires developing AI with sophisticated capabilities such as setting explicit goals, maintaining long-term memory, building persistent user models, reflecting on outputs, learning from mistakes, and explaining reasoning.

To achieve this, Sharples proposes developing hybrid AI systems that combine neural networks with symbolic AI technologies. These systems would need to integrate technical sophistication with ethical considerations, ensuring respectful engagement by giving learners control over their data and learning processes.

Importantly, the paper emphasizes that human teachers remain fundamental in this distributed system of human-AI interaction. They will continue to serve as conversation initiators, knowledge sources, and nurturing role models whose expertise and human touch cannot be replaced by technology.

The research raises critical philosophical questions about the future of learning: How can generative AI become a truly conversational learning tool? What ethical frameworks should guide these interactions? How do we design AI systems that can engage meaningfully while respecting human expertise?

Mike Sharples concludes by saying that designing new social AI systems for education requires more than fine-tuning existing language models for educational purposes.

It requires building GenAI to follow fundamental human rights, respect the expertise of teachers and care for the diversity and development of students. This work should be a partnership of experts in neural and symbolic AI working alongside experts in pedagogy and the science of learning, to design models founded on best principles of collaborative and conversational learning, engaging with teachers and education practitioners to test, critique and deploy them. The result could be a new online space for educational dialogue and exploration that merges human empathy and experience with networked machine learning.

Do we need specialised AI tools for education and instructional design?

In last week's edition of her newsletter, Philippa Hardman reported on an interesting research project she has undertaken to explore the effectiveness of Large Language Models (LLMs) like ChatGPT, Claude, and Gemini in instructional design. It seems instructional designers are increasingly using LLMs to complete learning design tasks like writing objectives, selecting instructional strategies and creating lesson plans.

The question Hardman set out to explore was: “how well do these generic, all-purpose LLMs handle the nuanced and complex tasks of instructional design? They may be fast, but are AI tools like Claude, ChatGPT, and Gemini actually any good at learning design?” To find out, she set two research questions. The first was to assess the LLMs' theoretical knowledge of instructional design and the second to assess their practical application. She then analysed each model’s responses to assess theoretical accuracy, practical feasibility, and alignment between theory and practice.

In her newsletter Hardman gives a detailed account of the outcomes of testing the different models from each of the three LLM providers. But the headline is that, across all generic LLMs, AI is limited in both its theoretical understanding and its practical application of instructional design. The reasons, she says, are that they lack industry-specific knowledge and nuance, they uncritically use outdated concepts and they display a superficial application of theory.

Hardman concludes that “While general-purpose AI models like Claude, ChatGPT, and Gemini offer a degree of assistance for instructional design, their limitations underscore the risks of relying on generic tools in a specialised field like instructional design.”

She goes on to point out that in industries like coding and medicine, similar risks have led to the emergence of fine-tuned AI copilots, such as Cursor for coders and Hippocratic AI for medics, and sees a need for “similar specialised AI tools tailored to the nuances of instructional design principles, practices and processes.”