
AI in Education – the question of hype and reality

Max Gruber / Better Images of AI / Banana / Plant / Flask / CC-BY 4.0

I have spent a lot of time over the past two weeks working on the first-year evaluation report for the AI Pioneers project. Evaluation seems to come in and out of fashion on European Commission-funded projects. Right now it is on an upswing, partly due to the move to funding projects on the basis of products and results rather than the number of working days claimed.

For the AI Pioneers project, I have adopted a participant-oriented approach to the evaluation. This takes the needs of project participants as its starting point. Participants are not seen simply as the direct target group of the project but also include other stakeholders and potential beneficiaries. Participant-oriented evaluation looks for patterns in the data as the evaluation progresses, and data is gathered in a variety of ways, using a range of techniques and culled from many different sources. Understanding grows from observation and bottom-up investigation rather than from rational deductive processes. The evaluator's key role is to represent multiple realities and values rather than a singular perspective.

Hopefully we will be able to publish the evaluation report early in the new year. But here are a few takeaways, mainly gleaned from interviews I undertook with each of the project partners.

The partners have a high level of commitment to the project. However, the work they are able to undertake depends to a large extent on their role in their organisations and on their organisation's role in the wider area of education. Pretty much all of the project partners feel overwhelmed by the sheer volume of reports and discourse around AI in education, and I certainly concur with this sentiment: the speed of development, especially around generative AI, makes it difficult to stay up to date. All the partners are using AI to some extent. Having said that, there is a tendency to think of generative AI as being AI as a whole, and to forget about the many uses of AI that are not based on Large Language Models.

Despite the hype (as John Naughton pointed out in his Memex 1.1 newsletter this week, AI is at the peak of the Gartner hype cycle; see illustration), finding actual examples of the use of AI in education and training, and in adult education, is not easy.

A survey undertaken by the AI Pioneers project found that few vocational education and training organisations in Europe had a policy on AI. There are considerable differences between sectors: marketing, graphic design, computing, robotics and healthcare appear to be ahead, but in many subjects and many institutions there is little actual movement towards incorporating AI, either as a subject or for teaching and learning. And where initial projects are taking place, they are often driven by enthusiastic individuals, with or without the knowledge of their managers.

This finding chimes with reports from other perspectives. Donald H Taylor and Egle Vinauskaite have produced a report looking at how AI is being used in workplace Learning and Development (L&D) today, which concludes that such use is in its infancy. "Of course, some extraordinary things are being done with AI within L&D," they say. "But our research suggests that where AI is currently being used by L&D, it is largely for the routine tasks of content creation and increased efficiency."

If there is one message L&D practitioners should take away from this report, it is that there is no need to panic – you are not falling far behind your peers, for the simple reason that very few are making major strides with AI. There is every need, however, to act, if only in a small way, to familiarize yourself with what AI has to offer.

Donald H Taylor and Egle Vinauskaite. Focus on AI in L&D, https://donaldhtaylor.co.uk/research_base/focus-on-ai-in-ld/

Indeed, for all the talk of digital transformation in education and training, it may be that education, and certainly higher education, is remarkably resistant to the much-vaunted hype of disruption, and that although AI will have a major impact, that impact may arrive more slowly than predicted.

Top tools for Learning

Photo by ThisisEngineering RAEng on Unsplash

Jane Hart from the Centre for Learning & Performance Technologies has published the seventeenth edition of her annual survey of the Top 100 Tools for Learning. There were 2,022 votes in the survey, drawn from both the education sector and industry. There was little change or surprise in the top three: YouTube, Google Search and Microsoft Teams. And perhaps there should be no surprise at the fourth-placed tool either: ChatGPT, which leapt in from nowhere, having not featured the year before. Other big winners in this year's survey were Netflix, Grammarly and TikTok. Jane explained that there were more votes from the education sector this year than last, leading to many educational tools regaining ground they had lost to productivity and workflow tools.

UNESCO AI Competency Framework for Teachers

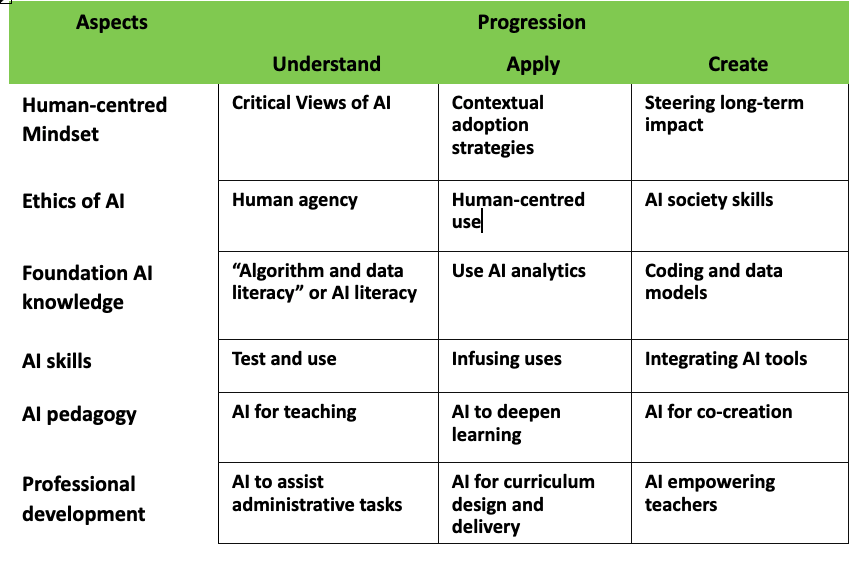

The AI CFT is targeted at a wide-ranging teacher community, including pre-service and in-service teachers, teacher educators and trainers in formal, non-formal education institutions, policymakers, officials and staff involved in teacher professional learning ecosystems from early childhood development, basic education, to higher and tertiary education.... The purpose of the AI CFT is to provide an inclusive framework that can guide teachers, teaching communities and the teacher education systems worldwide to leverage the educational affordances of AI, and develop the critical agency, knowledge, skills, attitudes and values needed to manage the risks and threats associated with AI. It promotes the responsible, ethical, equitable and inclusive design and use of AI in education.

The draft discussion document provides a diagram of a high-level structure of the proposed AI Competency Framework for Teachers.

What will happen to jobs with the rise and rise of Generative AI

OK, where to start? First, what is Generative AI? It is the posh term for things like ChatGPT from OpenAI or Bard from Google. These generative AIs, based on Large Language Models, are fast being integrated into all kinds of applications, starting with the chatbot built into Microsoft's Bing search engine. Generative AI also covers images: DALL-E is just one of a number of applications generating images from text or chat descriptions.

Predicting what will happen with jobs is a tricky business. Jobs have been threatened by successive waves of technology, yet in general the overall effect on employment appears to have been less than was predicted. Of course, there was a vast shift in employment with the advent of mechanisation in agriculture, but that took place around the end of the 19th century, at least in some countries. And it's pretty easy to find jobs that have disappeared in recent times, for instance employment in video shops. But in general, disruption appears to have been less than predicted in various surveys and reports. Technology has been used to increase productivity (for example, self-checkouts and automated stock management in shops) or to complement working processes and tasks rather than substitute for workers, and it has generated new jobs working with the technology.

But what is going to happen this time round? There are all sorts of predictions and speculation, not helped by no one quite knowing what Generative AI is capable of now, let alone what it will be able to do in the very near future. Bill Gates (the co-founder of Microsoft) has said the development of AI is as fundamental as the creation of the microprocessor, the personal computer, the Internet and the mobile phone. There is too much press and media speculation even to sum up the general reaction to the release of these new AI models and applications, although Stephen Downes is making a valiant attempt in his OLDaily newsletter. Personally, I enjoyed UK restaurant critic Jay Rayner's account in the Guardian of asking ChatGPT to write a restaurant review in his own inimitable style.

Of course, along with concerns over the impact on employment and jobs, there is much concern over the ethical implications of the new AI models, although it is worth noting that Ilkka Tuomi, writing on LinkedIn (his posts are well worth following), has pointed out that the EU has been an early mover in policy and regulation. Ilkka also, while noting that education (and teaching) is more than just knowledge transformation, says "dialogue and learning by teaching are very powerful pedagogical approaches and generative AI can be used in many different ways in learning and education." He concludes by saying: "This really could have a transformative impact."

Anyway, back to the more general impact on jobs, which is an issue for the new EU AI Pioneers project, focused on Vocational Education and Training and Adult Education. Last weekend saw the release of a report by Goldman Sachs predicting that as many as 300 million jobs could be affected by generative AI and that the labour market could face significant disruption. However, they suggest that "most jobs and industries are only partially exposed to automation and are thus more likely to be complemented rather than substituted by AI". In the US they estimate that 7% of jobs could be replaced by AI, with 63% being complemented by AI and 30% being unaffected by it. Perhaps one of the reasons for so much concern is that this wave of automation seems most likely to affect skilled work: according to Goldman Sachs, office and administrative support positions are at the greatest risk of task replacement (46%), followed by legal positions (44%) and architecture and engineering jobs (37%).

What I found most interesting in the full report (rather than the press summaries) is the methodology. The report includes a fairly detailed description. It says:

Generative AI’s ability to 1) generate new content that is indistinguishable from human-created output and 2) break down communication barriers between humans and machines reflects a major advancement with potentially large macroeconomic effects.

The report is based on "data from the O*NET database on the task content of over 900 occupations in the US (and later extend to over 2000 occupations in the European ESCO database) to estimate the share of total work exposed to labor-saving automation by AI by occupation and industry." They assume that AI is capable of completing tasks up to a difficulty of 4 on the 7-point O*NET "level" scale and "then take an importance- and complexity-weighted average of essential work tasks for each occupation and estimate the share of each occupation's total workload that AI has the potential to replace." They "further assume that occupations for which a significant share of workers' time is spent outdoors or performing physical labor cannot be automated by AI."
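The methodology is only described in outline, so what follows is a rough sketch of the idea under stated assumptions, not a reconstruction of the Goldman Sachs model. Only two elements come from the report: the level-4 threshold on a 7-point scale, and treating mostly outdoor or physical occupations as not automatable. The weighting (importance multiplied by difficulty level), the treatment of every listed task as essential, and the example occupations and ratings are all hypothetical.

```python
from dataclasses import dataclass, field

# From the report: AI is assumed able to complete tasks up to level 4
# on the 7-point O*NET "level" scale.
AI_MAX_LEVEL = 4


@dataclass
class Task:
    name: str
    importance: float  # hypothetical importance rating, e.g. 1 to 5
    level: int         # difficulty on a 7-point O*NET-style scale


@dataclass
class Occupation:
    title: str
    tasks: list[Task] = field(default_factory=list)
    # From the report: mostly outdoor or physical work is treated as not automatable.
    mostly_physical_or_outdoor: bool = False


def exposure_share(occ: Occupation, max_level: int = AI_MAX_LEVEL) -> float:
    """Estimated share of an occupation's weighted workload exposed to AI."""
    if occ.mostly_physical_or_outdoor:
        return 0.0
    # Hypothetical weighting: importance multiplied by difficulty level,
    # standing in for the report's "importance- and complexity-weighted average".
    weights = [t.importance * t.level for t in occ.tasks]
    total = sum(weights)
    if total == 0:
        return 0.0
    # Tasks at or below the threshold count as exposed to AI.
    exposed = sum(w for w, t in zip(weights, occ.tasks) if t.level <= max_level)
    return exposed / total


# Made-up example occupations, for illustration only.
clerk = Occupation("Office clerk", [
    Task("Data entry", importance=4, level=2),
    Task("Scheduling meetings", importance=3, level=3),
    Task("Resolving complaints", importance=3, level=5),
])
roofer = Occupation("Roofer", [Task("Estimating materials", 3, 3)],
                    mostly_physical_or_outdoor=True)

print(f"{clerk.title}: {exposure_share(clerk):.3f}")    # Office clerk: 0.531
print(f"{roofer.title}: {exposure_share(roofer):.3f}")  # Roofer: 0.000
```

Moving the threshold (for example, calling `exposure_share(clerk, max_level=5)`) can change the estimate sharply, which shows how much figures of this kind depend on the assumptions built into them.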

What are the implications for Vocational Education and Training and Adult Education? It seems clear that a very significant number of workers are going to need some form of training or professional development: at a general level for working with AI, and at a more specific level for undertaking new work tasks with AI. There is little to suggest that present education and training systems in Europe can meet these needs, even if we expect a ramping up of online provision. The EU's position seems to be to push the development of microcredentials, which, according to the EU agency Cedefop, "are seen to be fit for purposes such as addressing the needs of the labour market, lifelong learning, upskilling and reskilling, recognising prior learning, and widening access to a greater variety of learners." Yet in their recent report, they say that

"Microcredentials tend to be a flexible, demand-driven response to the need for skills in the labour market, but they can lack the same trust and recognition enjoyed by full qualifications. In terms of whether and how they might be accommodated within qualification systems, they can pose important questions about how to guarantee their value and currency without undermining both their own flexibility and the stability and dependability of established qualifications."

The need for new AI skills poses the question of how curricula can be adapted and updated faster than has traditionally been the case. It also poses major questions for institutions in adapting course provision to new skill needs at local and regional as well as national level. Of course, there are major challenges for the skills and competences of teachers and trainers, who, the AI and VET project found, were generally receptive to embracing AI for teaching and learning as well as new curriculum content, but felt the need for more support and professional training to update their own skills and knowledge (and this was before the launch of generative AI models).

All in all, there is a lot to think about here.